Any Lawful Use: Follow the Algorithm

Who controls the algorithmic war?

OpenAI and Google sign with the Pentagon, handing over all control of their AI models. Then Google invests forty billion dollars in the only company that refused to do so. OpenAI seals its military deal hours before a war begins. And a federal judge calls it all «Orwellian retaliation».

On February 27, 2026, at 5:01 PM Eastern Time, a deadline expired.

On one side stood Pete Hegseth, Secretary of War under the second Trump administration and head of a department that, by executive order, no longer calls itself «Defense» but «War».

On the other stood Dario Amodei, co-founder and CEO of Anthropic, the AI company behind Claude: the first frontier AI system ever cleared to run on classified US military networks.

Amodei refused to yield. «We cannot in good conscience agree to their request,» he wrote. At 5:01 PM, time was up. Donald Trump ordered every federal agency to halt commercial dealings with Anthropic. Hegseth, moreover, designated the company a «supply chain risk» — the same label applied to Huawei and ZTE.

Meanwhile, across Silicon Valley, OpenAI’s Sam Altman was announcing a classified deal with the Pentagon. That agreement, according to later reconstructions, was signed hours before the United States launched military operations against Iran.

This is the story of how artificial intelligence became, within just a few months, the modern equivalent of nuclear capability — a resource the state treats as exclusively its own. At the same time, paradoxically, it is the story of how the market is betting billions on the only company that said no.

Act I. Before Everything Changed

To understand what happened on February 27, 2026, we need to go back to July of the previous year. That month, Anthropic signed a $200 million contract with the Pentagon. Claude became the first frontier AI model ever approved to operate on classified US military networks.

However, the contract contained two hard limits that Anthropic insisted on. Claude could not be used for mass surveillance of American citizens. Nor could it be integrated into fully autonomous weapons systems. These restrictions were negotiated, agreed upon, and signed. For months, military operations continued under those terms without incident.

AI and Pentagon — The Platform Shift

Then, in December 2025, Secretary Hegseth announced GenAI.mil: a centralized AI platform for the Department of War. Initially, the platform ran on Google Gemini. The following month, Hegseth added xAI — Elon Musk’s company — stating publicly that he wanted to get rid of «woke AI». Sources confirmed the target of that remark was Anthropic.

Furthermore, in February 2026, a critical revelation emerged. Axios reported that Claude had been used in US military operations in Venezuela — without Anthropic’s knowledge or consent. The company announced it would «reassess the partnership». Three weeks later, the ultimatum arrived.

Act II. AI and Pentagon: The Clause War

«Any lawful government purpose» sounds like a reasonable formula. In practice, however, it hides a radical power shift. It strips the model’s developer of any right to control how the system is used. Only the military chain of command decides what falls within that category.

The phrase sounds harmless — bureaucratic, inevitable. It does not say «mass surveillance». It does not say «targeting». It does not say «autonomous weapons». It simply says: if the government considers it legal, the system may be used.

The problem, therefore, is not only legal. It is political. In wartime, the meaning of «lawful» is often decided inside classified structures, with secret information, compressed timelines, and limited public oversight. The clause does not eliminate ethics. It subordinates them.

«The machine did it coldly.»
Israeli intelligence officer on the Lavender system, quoted in the +972 Magazine investigation

AI and Pentagon — The Lavender Precedent

The Lavender system, used by the Israeli military in Gaza to identify potential Hamas combatants, compiled a database of 37,000 suspects. Its documented error rate was 10 percent. On an operational scale, that translates to thousands of people struck by mistake. In a pipeline processing a thousand targets per day, a 10 percent error rate means one hundred errors — every single day.
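The arithmetic behind these figures can be checked in a few lines. This is a minimal sketch that assumes the 10 percent rate applies uniformly to every flagged entry, which is a simplification. The function name `expected_errors` is illustrative and does not come from any real system.

```python
def expected_errors(flagged: int, error_rate_percent: int) -> int:
    """Expected number of wrongly flagged entries, assuming a uniform error rate.

    Integer arithmetic is used so the result is exact for whole-percent rates.
    """
    return flagged * error_rate_percent // 100


# Figures cited in the text: a database of 37,000 suspects at a 10 percent error rate.
print(expected_errors(37_000, 10))  # 3700

# Hypothetical pipeline from the text: 1,000 targets per day at the same rate.
print(expected_errors(1_000, 10))  # 100
```

Under that assumption, the full database would contain about 3,700 wrongly flagged people, which is the scale behind the phrase «thousands».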

AI and Pentagon — The Rubber Stamp

The Maven Smart System allowed a single analyst to process up to eighty targets per hour. That speed, however, made meaningful human verification technically impossible. The «human oversight» cited in contracts was shrinking into what researchers call «rubber stamping» — automatic ratification of algorithmic output, where an operator spends fewer than twenty seconds approving each target.
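The time budget implied by that throughput is easy to work out. This is a rough sketch that assumes targets arrive at an even pace across the hour, which real workloads will not. The name `seconds_per_target` is illustrative.

```python
SECONDS_PER_HOUR = 3600


def seconds_per_target(targets_per_hour: int) -> float:
    """Average time available per target at a given hourly throughput."""
    return SECONDS_PER_HOUR / targets_per_hour


# Throughput cited in the text: up to eighty targets per hour for one analyst.
print(seconds_per_target(80))  # 45.0
```

Forty-five seconds is the whole average budget per target at that pace, and it has to cover reading, cross-checking, and approval together.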

In other words, the most advanced form of automation does not replace the human. Instead, it turns the human into a validator. The operator remains «in the loop» — but the loop has already been compressed beyond recognition. When the machine produces a recommendation in seconds, when the system aggregates signals no human could verify in real time, and when operational pressure demands speed, human supervision risks becoming a signature. Not command. Ratification.

As a result, responsibility dissolves. No single node in the chain owns the error — not the software vendor, not the analyst, not the commanding officer.

Act III. The Price of Dissent

The government’s response to Anthropic’s refusal was both swift and disproportionate. The supply chain risk designation — invoking Title 10, Section 3252 of the US Code, a statute designed to exclude compromised foreign vendors like Huawei — had the practical effect of isolating Anthropic from the entire US defense ecosystem.

The Court Pushes Back

Nevertheless, Anthropic fought back in court. On March 26, federal judge Rita F. Lin granted a preliminary injunction. In 43 pages, she wrote that the government’s actions appeared aimed not at protecting national security, but at punishing a company for speaking publicly. She used the word «Orwellian».

«The Department of War’s documents show that Anthropic was designated a supply chain risk because of its ‘hostile attitude through the press.’ Punishing Anthropic for bringing the government’s contractual position to public attention is classic illegal retaliation under the First Amendment. […] Anthropic has shown that these sweeping punitive measures were likely unlawful and that it is suffering irreparable harm.»

On April 8, the DC Circuit denied Anthropic’s request for an emergency stay. The stated reason: «ongoing armed conflict». Consequently, Anthropic was permitted to work with civilian agencies, but not with the Pentagon.

Act IV. OpenAI, Google, AI and Pentagon — The Forty-Billion-Dollar Paradox

On April 24, 2026, less than two months after signing a Pentagon deal that surrendered all ethical veto power over its AI models, Google announced an investment of up to forty billion dollars in Anthropic. Ten billion immediately; thirty billion more tied to commercial milestones.

Google hands the Pentagon every ethical veto over its AI models. Then it invests forty billion dollars in the only company that defended those vetoes — in court, against the same government.

The same hands sign both documents.

OpenAI and Google — Two Timelines, One Strategy

For Google, there is no contradiction. The two moves operate on different time horizons. In the short term, the Pentagon partnership secures cloud contracts. In the long term, holding a significant stake in Anthropic means having a foot in the door of the model that could win the AI race. Google, therefore, does not choose between compliance and dissent. It buys exposure to both.

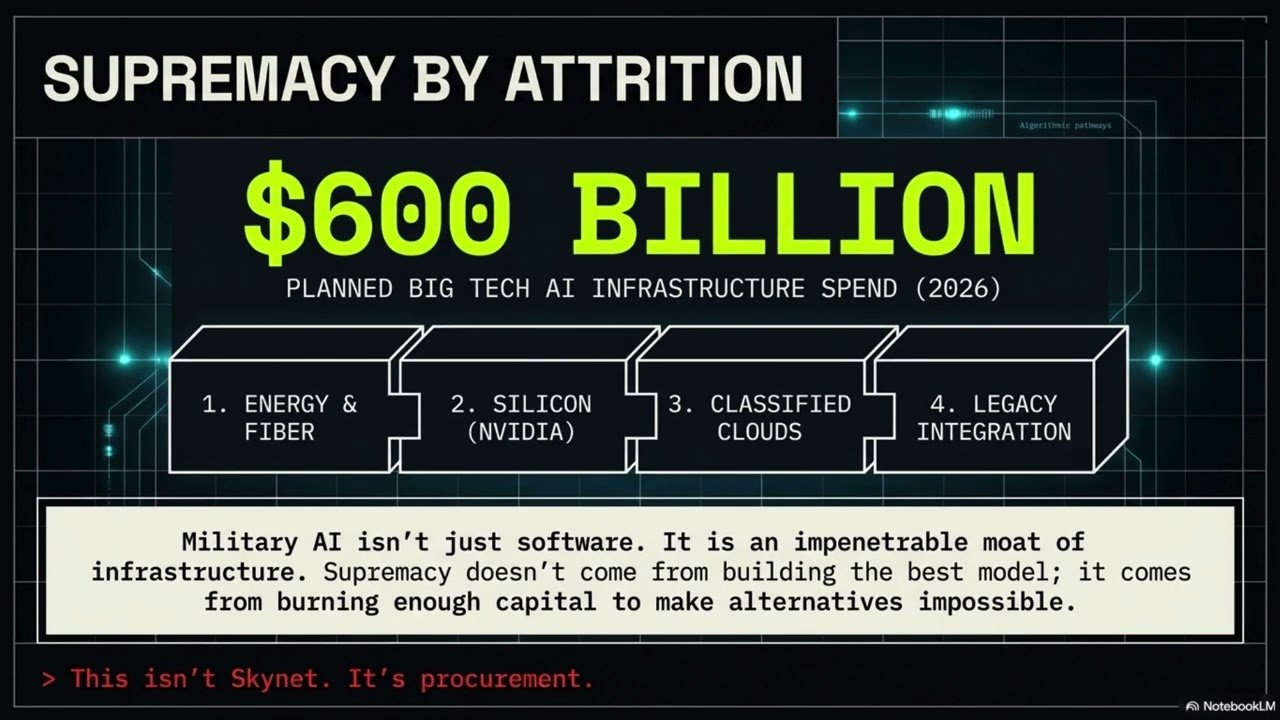

OpenAI and Google — Why Capital Is the Weapon

AI supremacy does not come from the best model. It comes from whoever can burn enough capital to make every alternative impossible. Military AI is not just software. It is energy, fiber, silicon, access to classified networks, cleared personnel, and integration with legacy systems. Each billion spent creates dependency. Each model deployed on a classified network creates lock-in. Each «lawful use» clause creates a space where responsibility can slide from node to node — and belong to no one. This is not Skynet. It is procurement.

Act V. AI and Pentagon — The Unanswered Question

One scene keeps coming up in AI safety researchers’ accounts: the Stanislav Petrov moment. On September 26, 1983, the Soviet automated missile warning system detected five incoming American nuclear missiles. Petrov chose not to trigger the alarm. He judged — against what the system was telling him — that it was a false alert. He was right. The world did not end.

An AI system would not have hesitated. Large language models generate the most probable next output given a context. They have no capacity to doubt their own data. The «fog of war» is not a problem AI solves. Rather, it is a problem AI amplifies — replacing human uncertainty with algorithmic certainty.

AI and Pentagon — Who Is Liable?

None of the contracts signed by the Pentagon with Google, OpenAI, or xAI answers the key question: if an AI system recommends a strike that turns out to be based on false information, who is legally responsible? For now, the answer is: no one.

AI and Pentagon: Big Tech Agreements at a Glance

| Company | Status | Key clause | Ethical limits |
|---|---|---|---|
| Google | Signed | «Any lawful purpose»; no corporate veto | Generic commitment, subordinate to the Pentagon |
| OpenAI | Signed | Cloud-only; proprietary safety stack; OpenAI staff in the loop | No domestic surveillance, no autonomous weapons, but final decision rests with the government |
| xAI / Grok | Signed | Integration for mission planning | Full alignment |
| Anthropic | In litigation | $200M contract rescinded after refusal | No surveillance, no autonomous weapons: the only red lines |

Epilogue

On February 27, 2026, as Hegseth’s countdown expired and OpenAI signed its Pentagon deal, part of the US government was already planning military operations against Iran. That night, the war started. Claude was not in the chain of command. OpenAI was.

On May 1, 2026, the Department of War announced agreements with eight companies — SpaceX, OpenAI, Google, NVIDIA, Reflection, Microsoft, AWS, and Oracle — to bring advanced AI capabilities to the highest-level classified networks. The stated goal: an «AI-first» military force. The supply chain is now complete. Models. Cloud. Chips. Satellites. Classified networks. Infrastructure.

The central question of this investigation — who decides when an algorithm may operate in a war zone, and who answers when it is wrong — still has no answer. The contracts do not answer it. The laws do not answer it. The courts are working on it. The war, however, does not wait.

Follow the algorithm. You will find a chain of responsibility — distributed with surgical precision — that leads nowhere.

Primary sources

- CNBC, «Anthropic loses appeals court bid to temporarily block Pentagon blacklisting», April 8, 2026

- Mayer Brown, «Pentagon Designates Anthropic a Supply Chain Risk», March 27, 2026

- CNN Business, «Anthropic rejects latest Pentagon offer», February 27, 2026

- Electronic Frontier Foundation, «The Anthropic-DOD Conflict», March 26, 2026

- TechPolicy.Press, «A Timeline of the Anthropic-Pentagon Dispute», updated April 2026

- Bloomberg / CNBC, «Google to invest up to $40 billion in Anthropic», April 24, 2026

- Atlantic Council, «The Anthropic standoff reveals a larger crisis of trust over AI», March 26, 2026

- +972 Magazine / The Guardian investigation on the Lavender system, 2024

- Stanford / MIT study on algorithmic escalation in wargames, 2024

- Order of Judge Rita F. Lin, Northern District of California, March 26, 2026
