The (Dystopian) Revolution of Automation

2025 has been celebrated as “the year of AI agents.” But what does it really mean when a Large Language Model stops being a text generator and becomes a system that plans, acts, calls tools, iterates? And above all: is this transformation a technical revolution—or yet another illusion of algorithmic control?

AI agents are no longer academic prototypes or conference demos. They’re infrastructure. They’re workflows. They’re decisions making other decisions. And adoption is accelerating: major 2025 surveys suggest that a substantial share of organizations are already experimenting with or scaling agents, and in one Google Cloud study 52% of executives say their organizations have already deployed them.

The critical point isn’t “how good they are.”

It’s what happens when an autonomous system perceives, reasons, and acts without truly distinguishing between a fact and a statistical probability. Even when hallucination rates drop below 1% on “grounded” tasks, a residual rate of, say, 0.7% inevitably becomes an incident once you run it at scale in production.
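To make the scale argument concrete, here is a minimal arithmetic sketch. The 0.7% figure is only an illustrative rate, the run volumes are assumptions, and errors are treated as independent, which real workloads will not strictly satisfy.

```python
def expected_incidents(error_rate: float, runs: int) -> float:
    """Expected number of erroneous runs, assuming a fixed per-run error rate."""
    return error_rate * runs


def prob_at_least_one(error_rate: float, runs: int) -> float:
    """Probability that at least one run goes wrong, assuming independent runs."""
    return 1.0 - (1.0 - error_rate) ** runs


rate = 0.007  # illustrative 0.7% error rate
for runs in (100, 10_000, 1_000_000):  # hypothetical production volumes
    print(
        f"{runs:>9,} runs: ~{expected_incidents(rate, runs):,.1f} expected errors, "
        f"P(at least one) = {prob_at_least_one(rate, runs):.3f}"
    )
```

At 10,000 runs the expected count is about 70 errors: a rate that looks negligible per call stops being negligible per month.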

From software to cognitive system: the structural mutation of AI agent automation

An AI agent is a software system that uses advanced language models, memory (including persistent memory), reasoning capabilities, and access to external tools to pursue complex goals with varying degrees of autonomy. Unlike traditional chatbots, agents maintain context, plan action sequences, call APIs, coordinate systems, and complete tasks independently.

But let’s call it what it is: this “autonomy” remains limited and fragile. When we say “autonomous agent,” we’re often describing a system that generates its own prompts for next steps or writes code for APIs—not an entity that understands the world. As multi-step complexity increases, the error surface grows: wrong tools, invented parameters, broken chains, plausible-but-false results.
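The growth of the error surface with multi-step complexity can be illustrated with a rough sketch. It assumes every step succeeds independently with the same probability, which is a simplification; the 99% per-step figure is hypothetical.

```python
def chain_success(step_success: float, steps: int) -> float:
    """Probability that every step in a chain succeeds (independence assumed)."""
    return step_success ** steps


per_step = 0.99  # hypothetical per-step reliability
for steps in (1, 5, 10, 25, 50):
    print(f"{steps:>2} steps: {chain_success(per_step, steps):.1%} clean runs")
```

With a per-step reliability of 99%, a 10-step chain completes cleanly only about 90% of the time, and the figure keeps falling as steps are added.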

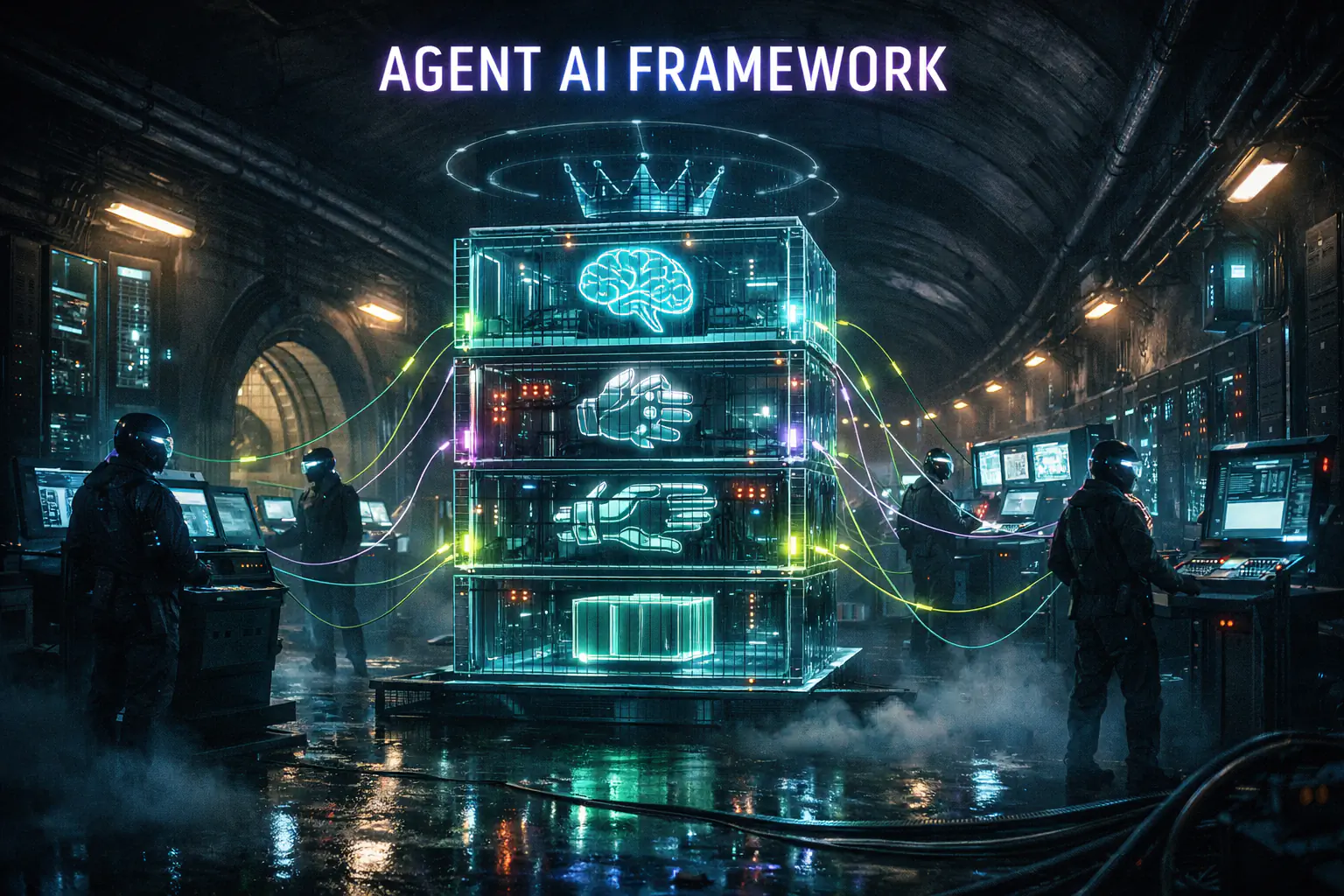

Agent anatomy: the four pillars of the system

Every powerful agent stands on four components that turn reasoning into action:

Orchestration: the executive mind. Decides what action to take, when to call a tool, how to handle errors, retries, and alternative paths. If orchestration is weak, the agent looks smart but collapses as soon as it leaves the script.
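As a minimal sketch of what “errors, retries, and alternative paths” can look like in code: the names below (`ToolError`, `run_with_fallback`, `flaky_api`, `cached_answer`) are hypothetical and not taken from any framework.

```python
from typing import Callable, Sequence


class ToolError(Exception):
    """Raised by a (hypothetical) tool when a call fails."""


def run_with_fallback(paths: Sequence[Callable[[], str]], max_retries: int = 2) -> str:
    """Try each path in order, retrying each up to max_retries times."""
    errors = []
    for path in paths:
        for attempt in range(1, max_retries + 1):
            try:
                return path()
            except ToolError as exc:
                # Keep a record of every failure so the final error is explainable.
                errors.append(f"{path.__name__} attempt {attempt}: {exc}")
    raise RuntimeError("all paths failed: " + "; ".join(errors))


def flaky_api() -> str:
    raise ToolError("timeout")  # simulated primary path that always fails


def cached_answer() -> str:
    return "cached answer"  # simulated alternative path


result = run_with_fallback([flaky_api, cached_answer])
print(result)
```

The point of the design is that the failure policy lives in the orchestrator, not in the model: the retry limit and the order of fallbacks are explicit and testable.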

Model: the brain. An LLM breaks down goals, creates plans, handles ambiguity. But it doesn’t “know” facts: it predicts tokens consistent with statistical patterns. Meaning: it can be wrong in a perfectly convincing way.

Tools: the hands. APIs, functions, connectors. Without tools the agent is conversational; with tools it becomes operational (databases, ticketing, email, booking, finance, industry). And here’s the uncomfortable truth: the more tools you give an agent, the more failure points you create.
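One way to contain tool failure points is to validate every call before it runs. The sketch below is a hypothetical registry (`register`, `call_tool`, and the `create_ticket` tool are illustrative names) that rejects unknown tools and invented or mistyped parameters.

```python
from typing import Any, Callable

TOOLS: dict[str, dict[str, Any]] = {}


def register(name: str, params: dict[str, type], fn: Callable[..., Any]) -> None:
    """Declare a tool together with the exact parameters it accepts."""
    TOOLS[name] = {"params": params, "fn": fn}


def call_tool(name: str, args: dict[str, Any]) -> Any:
    """Run a tool only if the name and every argument match its declaration."""
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    spec = TOOLS[name]
    unknown = set(args) - set(spec["params"])
    missing = set(spec["params"]) - set(args)
    if unknown or missing:
        raise ValueError(f"bad arguments: unknown={sorted(unknown)} missing={sorted(missing)}")
    for key, expected_type in spec["params"].items():
        if not isinstance(args[key], expected_type):
            raise TypeError(f"{key} must be {expected_type.__name__}")
    return spec["fn"](**args)


# Hypothetical ticketing tool used only for illustration.
register(
    "create_ticket",
    {"title": str, "priority": int},
    lambda title, priority: f"ticket:{title}:{priority}",
)

print(call_tool("create_ticket", {"title": "VPN down", "priority": 2}))
```

A model-proposed call such as `call_tool("create_ticket", {"title": "x", "urgency": "high"})` fails loudly here instead of reaching the downstream system.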

Runtime: the environment. Infra, deploy, scaling, logging, policy, observability. You’re not “launching a model”: you’re managing a system that interacts with other systems, often non-deterministic.

AI agent automation and dystopia: the reliability problem

Hallucinations aren’t a sporadic bug: they’re a systemic effect of how generative models produce text. The problem explodes with agents because an error doesn’t stay inside an answer: it becomes an action. And an action can propagate (writing data, opening incidents, sending communications, generating “official” reports).

Grounding tries to anchor outputs to verifiable sources: RAG, GraphRAG, agentic retrieval. But even when benchmarks show strong improvements on tasks anchored to a document, this does not eliminate risk in high-impact domains (legal, finance, healthcare). It reduces probability—it does not erase responsibility.

Operational rule: if the output can become an irreversible action, you need human-in-the-loop, logging, audit trails, and least-privilege policies. The agent should never be able to do more than what you’d be willing to sign off on.
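The operational rule above can be sketched as a small gate. Everything here is an assumption for illustration (the `Action` and `Gate` names, the allow-list, the `approve` callback standing in for a human reviewer); a real deployment would back this with IAM and durable logs.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Action:
    name: str
    irreversible: bool
    run: Callable[[], str]


@dataclass
class Gate:
    allowed: set[str]                 # least privilege: explicit allow-list
    approve: Callable[[Action], bool]  # stand-in for a human sign-off
    audit_log: list[str] = field(default_factory=list)

    def execute(self, action: Action) -> Optional[str]:
        if action.name not in self.allowed:
            self.audit_log.append(f"DENIED {action.name}: not in allow-list")
            raise PermissionError(action.name)
        if action.irreversible and not self.approve(action):
            self.audit_log.append(f"REJECTED {action.name}: human declined")
            return None
        result = action.run()
        self.audit_log.append(f"EXECUTED {action.name}")
        return result


# Hypothetical setup: the reviewer declines everything irreversible.
gate = Gate(allowed={"read_ticket", "send_refund"}, approve=lambda a: False)
print(gate.execute(Action("read_ticket", False, lambda: "ticket body")))
print(gate.execute(Action("send_refund", True, lambda: "refund sent")))
print(gate.audit_log)
```

Reversible actions pass straight through, irreversible ones wait for a person, and anything outside the allow-list never runs at all.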

Agent frameworks: the architecture of collaboration

The market for AI agent frameworks has exploded: LangChain/LangGraph (modular and graph-based), AutoGen (conversational multi-agent), CrewAI (roles and operational “teams”), Semantic Kernel and Microsoft Agent Framework (enterprise integration). The choice is not neutral: it determines constraints, failure points, observability, and technical debt.

Between late 2024 and 2025, two open protocols emerged to reduce fragmentation:

- MCP (Model Context Protocol): a standard to connect apps/agents to tools and data sources in a reusable, interoperable way.

- A2A (Agent-to-Agent Protocol): a protocol for agent-to-agent communication—discovery, capability exchange, task delegation—even across different stacks.
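To give a feel for what “a standard to connect agents to tools” means on the wire: as far as the published MCP specification describes it, messages are JSON-RPC 2.0 and a tool invocation uses the `tools/call` method. The sketch below only builds such a message; the tool name `lookup_ticket` and its arguments are hypothetical, and transport, initialization, and error handling are left out.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool exposed by a hypothetical ticketing server.
print(mcp_tool_call("lookup_ticket", {"ticket_id": "T-1001"}))
```

For real integrations, an official MCP SDK is the safer route than hand-built messages; the value of the sketch is only to show that the contract is a plain, inspectable message rather than framework-specific glue.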

AgentOps: from demo to production (the real abyss)

Prototyping an agent is easy. Making it reliable is a different evolutionary species. AgentOps brings DevOps/MLOps principles into the agentic world: testing, evaluation, reproducible deployment, monitoring, feedback loops.

Evaluation can’t stop at the final output: you need tests for non-LLM components (tools), trajectory analysis (Reason → Act → Observe), outcome evaluation, and system monitoring (latency, tool errors, drift, escalation, user feedback).
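A minimal sketch of evaluating the trajectory alongside the outcome follows. The `Step` record, the metric names, and the example run are all assumptions for illustration, not the API of any evaluation tool.

```python
from dataclasses import dataclass


@dataclass
class Step:
    thought: str  # Reason
    tool: str     # Act
    ok: bool      # Observe: did the tool call succeed?


def evaluate(trajectory: list[Step], expected_tools: list[str], outcome_correct: bool) -> dict:
    """Score one run on its path (trajectory) as well as its final answer."""
    used = [step.tool for step in trajectory]
    failures = sum(not step.ok for step in trajectory)
    return {
        "tool_error_rate": failures / len(trajectory) if trajectory else 0.0,
        "trajectory_match": used == expected_tools,
        "outcome_correct": outcome_correct,
    }


# Hypothetical run: the final answer is right, but one search call failed and was retried.
run = [
    Step("need recent invoices", "search", ok=False),
    Step("retry the search", "search", ok=True),
    Step("condense the results", "summarize", ok=True),
]
report = evaluate(run, expected_tools=["search", "summarize"], outcome_correct=True)
print(report)
```

An outcome-only test would mark this run as a pass; the trajectory metrics show that the path deviated from the expected one and that a tool failed along the way.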

Security and responsibility: who’s accountable when the agent fails?

Security isn’t just text “guardrails.” It’s infrastructure governance: IAM, least privilege, auditing, observability. And above all, legal responsibility: cases such as Moffatt v. Air Canada have already shown that a company remains responsible for wrong information provided by a chatbot or agent in its official channels.

In 2025 the issue became visible even outside tech: public reports corrected after invented citations; defamation claims linked to hallucinated outputs; disputes over “invented” customer-care policies. These aren’t edge cases. They’re the obvious consequence of non-deterministic systems put into production without discipline.

The autonomy paradox

The more autonomy you give an agent, the harder it becomes to verify every step. How do you audit a sequence of 10 actions, 5 APIs, 3 databases, 2 retries, and a perfect “final” answer? How do you explain to an auditor why the agent chose that exact path? Debugging emergent behavior isn’t a matter of reading a log; it’s a systemic diagnosis.
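One partial answer to the auditor’s question is to record the stated reason next to every action at the moment it happens. The sketch below is a hypothetical trace structure (`Trace`, `TraceStep`, and the example tools are illustrative names); note that it captures what the agent said it was doing, which is not proof of why it did it.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TraceStep:
    index: int
    reason: str   # the agent's stated rationale for this step
    tool: str
    args: dict
    outcome: str


@dataclass
class Trace:
    run_id: str
    steps: list[TraceStep] = field(default_factory=list)

    def record(self, reason: str, tool: str, args: dict, outcome: str) -> None:
        self.steps.append(TraceStep(len(self.steps) + 1, reason, tool, args, outcome))

    def to_jsonl(self) -> str:
        """One JSON object per step, suitable for shipping to a log store."""
        return "\n".join(json.dumps({"run_id": self.run_id, **asdict(s)}) for s in self.steps)

    def explain(self) -> list[str]:
        """Human-readable path summary for a reviewer."""
        return [f"{s.index}. {s.tool}: {s.reason} -> {s.outcome}" for s in self.steps]


trace = Trace(run_id="run-001")  # hypothetical run identifier
trace.record("need customer tier", "crm_lookup", {"customer": "C-42"}, "found")
trace.record("tier allows refund", "refund_api", {"amount": 20}, "queued for approval")
print("\n".join(trace.explain()))
```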

AI agent automation and dystopia: the future isn’t autonomous, it’s hybrid

AI agents aren’t just hype: they’re a real paradigm shift. But it’s not what vendors sell. It’s not “full autonomy.” It’s a new form of automation: hybrid, where the machine amplifies and the human governs. It only works with rigor: policy, evaluation, observability, responsibility.

2025 is “the year of the agent.” 2026 is the year of truth: when pilots become critical infrastructure and errors stop being demo failures and become real-world damage.

Sources and further reading

- The state of AI in 2025: Agents, innovation, and transformation — Alex Singla et al., McKinsey (5 Nov 2025)

- Google Cloud Study: 52% of executives say their organizations have deployed AI agents — Google Cloud Press Corner (4 Sep 2025)

- Overview of Agent Development Kit (ADK) and Vertex AI Agent Engine — Google Cloud Documentation (updated 8 Jan 2026)

- Introducing the Model Context Protocol (MCP) — Anthropic (25 Nov 2024)

- Model Context Protocol — Specification (draft) — modelcontextprotocol.io

- Announcing the Agent2Agent Protocol (A2A) — Google Developers Blog (9 Apr 2025)

- Vectara Hallucination Leaderboard (HHEM) — dataset and methodology — GitHub (Vectara)

- Next generation of Vectara’s Hallucination Leaderboard — Vectara Blog (19 Nov 2025)

- Air Canada: company liability for incorrect info provided by chatbot (Moffatt v. Air Canada) — American Bar Association, Business Law Today (29 Feb 2024)

- Deloitte: partial refund after errors and fabricated citations in a public report — Associated Press (Oct 2025)

- Walters v. OpenAI — legal notes on defamation and hallucinated outputs — Loeb & Loeb (May 2025)

- Gartner: over 40% of agentic AI projects may be canceled by 2027 — Gartner Press Release (25 Jun 2025)