Neutral AI: The Trillion-Dollar Lie

“AI is neutral” is a phrase that circulates like a reassuring slogan. Behind this promise, however, lies an industry worth tens of trillions of dollars in capitalization, investments, and expectations. This is not just about software; it’s about power: infrastructures that decide who can compute, and narratives that shape desires and fears. As a result, AI becomes a political-industrial construct embedded in culture.

In other words, AI is not neutral because it reflects those who build it. It is not a free-floating object. To see this, look at the rivalry between Mark Zuckerberg and Sam Altman.

Neutral AI: Altman vs. Zuckerberg

Zuckerberg builds hardware, interfaces, and data centers; he believes in controlling the material stack. Altman tells stories: AGI for humanity, global alliances, public demos. Their methods look different, but both pursue the same goal: dominating the AI ecosystem by turning consensus into cultural lock-in.

This strategy thrives on a narrative machine: conferences, press releases, flashy model launches. The more the story is repeated, the more the illusion of neutrality takes root.

What does “neutral” really mean?

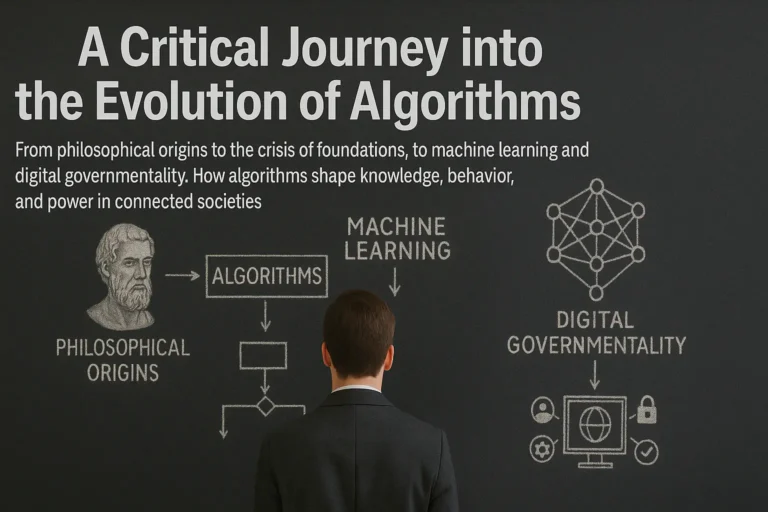

Every model is born of human choices: what to include, what to exclude, how to weigh data. These decisions are shaped by media, regulation, and markets. Furthermore, datasets don’t represent the world—they reflect what’s visible, profitable, and allowed.

When these datasets meet compute power, they form a probabilistic reality: what is frequent becomes plausible, then desirable, then normal. In short, what appears neutral is often just repetition.

This feedback loop is self-reinforcing. If something “performs,” the algorithm shows it more. Media amplify it. Future datasets find it more valid. And so on.
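The loop described above can be made concrete with a toy model. The sketch below is only an illustration under stated assumptions: the three content categories, their starting shares, and the `boost` exponent are hypothetical, and the rule "exposure grows faster than current share" is a deliberate simplification of how ranking, media, and retraining interact, not a description of any real system.

```python
def amplify(shares, rounds, boost=1.5):
    """Re-weight items each round so that exposure grows faster than share.

    `boost` > 1 is an assumed amplification factor: whatever is already
    frequent gets shown disproportionately more in the next round.
    """
    history = [dict(shares)]
    for _ in range(rounds):
        # Items that "perform" are shown more (share raised to a power > 1).
        weighted = {item: share ** boost for item, share in shares.items()}
        total = sum(weighted.values())
        # The amplified exposure becomes the next round's "dataset".
        shares = {item: weight / total for item, weight in weighted.items()}
        history.append(shares)
    return history


# Hypothetical starting distribution: no category holds a majority.
start = {"mainstream": 0.40, "niche": 0.35, "marginal": 0.25}
history = amplify(start, rounds=10)

for step in (0, 5, 10):
    print(step, {item: round(share, 3) for item, share in history[step].items()})
```

Even with a modest initial lead, the most frequent category crowds out the rest after a few rounds. Nothing in the rule mentions preference or quality; repetition alone produces the apparent consensus.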

The Myth of Neutral AI

The myth of neutral AI pushes public debate onto false tracks: “open vs closed,” “safe vs dangerous.” Meanwhile, the real issue remains hidden: who controls the compute and writes the story.

In fact, power today is not measured in followers, but in compute per researcher: the threshold that determines who can ask questions, run experiments, and produce truth. Those without access don’t shape reality—they consume it.

Narrative becomes infrastructure

In today’s information ecosystem, APIs, models, policies, and interfaces fuse into private sovereignties. A well-timed announcement shifts markets, persuades regulators, and reframes the debate.

This isn’t paranoia—it’s strategy. “Safety” means reputation management. “Openness” is often a partial window, just enough to sustain community trust—never enough to redistribute control.

You are not the user. You are the training data.

Every prompt, photo, and voice clip becomes raw material. If AI were neutral, reclaiming that data would be frictionless. It isn’t: users face dark patterns, asymmetric disclosures, and continuous extraction. Neutrality is a strategic label.

Can Europe Act?

Often seen as a brake, Europe may hold a key: regulation as a standard. Just as GDPR had global effects, the AI Act could be a geopolitical lever.

However, without public compute, auditable datasets, and mandatory interoperability, regulation becomes just a label on someone else’s infrastructure. We need common tools and real access to computing power.

The AI Bubble

Some believe the AI bubble will burst and fix things. But bubbles destroy the weak and crown the strong. What remains after each crisis are the structures and myths.

This is why “AI is neutral: the $30 trillion lie” is not hyperbole—it’s a diagnosis. Those trillions represent the infrastructural depth of the phenomenon and its power to reshape culture.

The End of Free AI

If we want to use digital tools without being used by them, we must dismantle the neutrality assumption. Data is not neutral. Compute has a cost. Stories open or close possibilities.

We need daily choices, such as open formats, local identities, and interoperable services, but also public compute, independent research, and democratic control over data. This isn’t anti-innovation. It gives innovation back its legitimacy.

In conclusion, the AI revolution isn’t raining down from the future—we’re writing it right now. As long as we call it “neutral,” we’ll keep surrendering pieces of reality. The first act of resistance is to name it for what it is: a power architecture. From there, we negotiate, demand, and build alternatives.