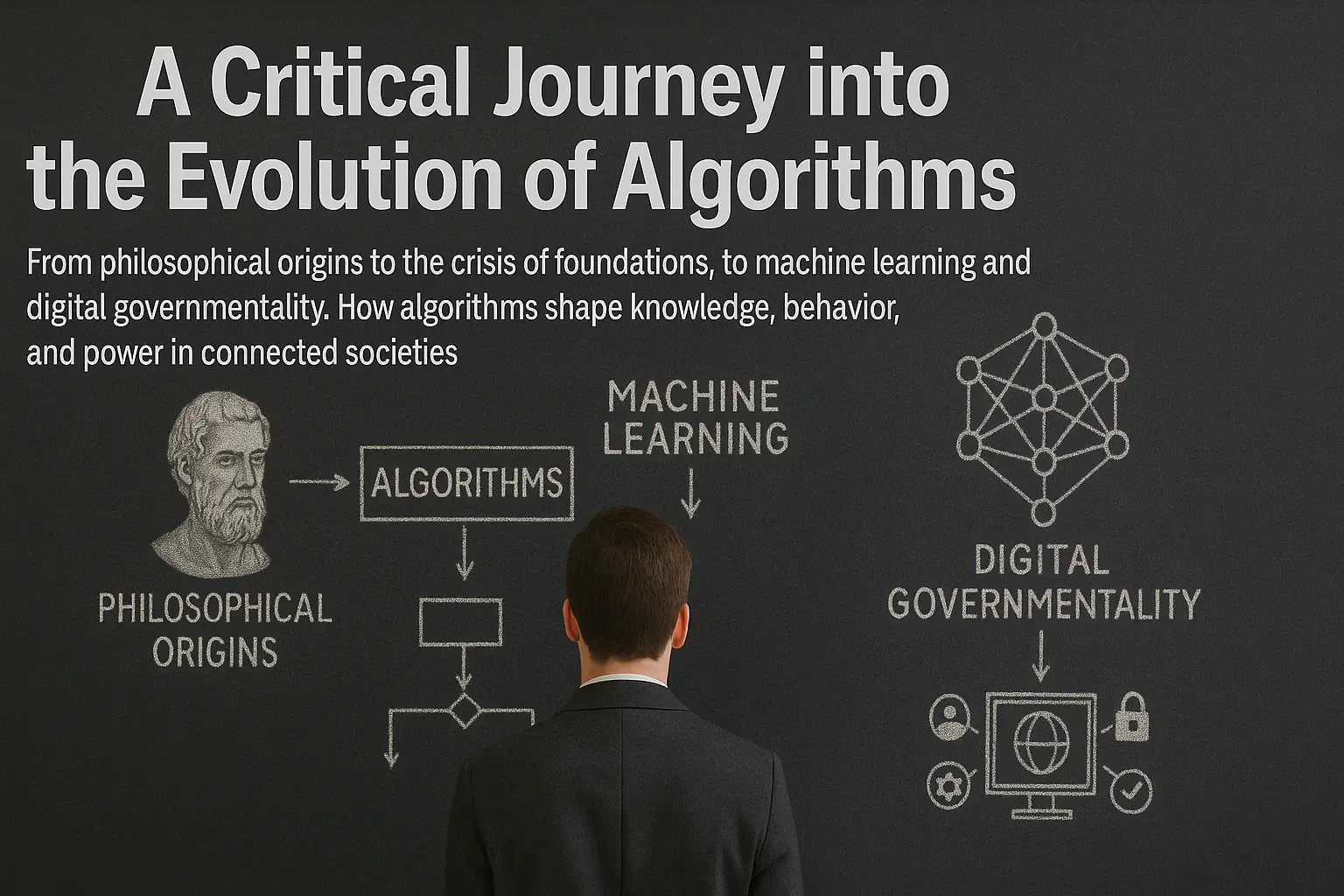

ALGORITHM

From Leibniz’s algorithms to AI logic

In the 17th century, the philosopher Gottfried Wilhelm Leibniz imagined the Characteristica Universalis: a universal language capable of reducing all reasoning to calculable operations. “Calculemus!”, he said: instead of disputing, let us calculate. This rationalist dream – the mechanisation of thought – spans three centuries of history and materialises in the computational devices that now govern our lives. But what has materialised is not Leibniz’s universal rationality: it is a particular rationality, embedded in the power relations of digital capitalism.

What are algorithms?

An algorithm is a finite sequence of unambiguous instructions that transforms an input into an output through defined computational steps. In its classical formulation, its fundamental properties are:

- Definiteness: each instruction must be precise and unambiguous

- Finiteness: the sequence must terminate after a finite number of steps

- Effectiveness: each operation must be mechanically executable

- Determinism: given the same input, the output is always identical
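The four properties listed above can be seen at work in one of the oldest known procedures, Euclid's method for the greatest common divisor. The following is a minimal sketch in Python; the function name `gcd` and the choice of example are illustrative assumptions, not taken from the entry.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of two non-negative integers (Euclid's method)."""
    if a < 0 or b < 0:
        raise ValueError("inputs must be non-negative")
    # Definiteness and effectiveness: each step is one unambiguous,
    # mechanically executable arithmetic operation.
    # Finiteness: b strictly decreases and never drops below 0, so the loop ends.
    while b != 0:
        a, b = b, a % b
    # Determinism: the same pair (a, b) always produces the same result.
    return a


print(gcd(48, 18))  # 6
```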

The term derives from the Persian mathematician Muhammad ibn Mūsā al-Khwārizmī (9th century), whose arithmetic treatises codified systematic procedures for solving classes of problems. Before becoming code, the algorithm was technique: a method, not yet a theory.

The Crisis of Foundations

In 1928, David Hilbert explicitly revived the Leibnizian project by formulating the Entscheidungsproblem, the decision problem. It asks whether there exists an algorithm capable of mechanically determining, for any well-formed statement of formal logic, whether or not it is provable. Hilbert expected the answer to be yes. If such an algorithm existed, Leibniz’s dream would be realised: mathematical truth would become a matter of automatic calculation.

But in 1931, Kurt Gödel’s incompleteness theorems shattered this programme by showing that in any consistent, sufficiently powerful formal system there exist true propositions that cannot be proven within the system itself. In 1936, Alonzo Church proved the undecidability of first-order logic: there is no universal algorithm capable of determining the validity of every logical formula.

Algorithms can calculate only recursive (computable) functions: those whose values can be obtained by decomposing a complex problem into simpler problems chained together. A problem is decidable only when some algorithm answers every instance of it correctly, yes or no, in a finite number of steps.

Turing and the Universal Machine

In 1936, Alan Turing reduced computability to a mechanical procedure. His result, now known as the halting problem, demonstrates that no general method can determine in advance, for every algorithm and every input, whether the computation will eventually stop or continue indefinitely. Turing nonetheless introduced a crucial epistemological shift: it is not necessary to prove the internal formal logicality of a system in order to obtain meaningful answers. It is enough to have correct answers relative to the reference parameters.

Thus we get the Church–Turing thesis: every procedure that can be carried out by mechanically manipulating symbols can, in principle, be carried out by a Turing machine. The universal Turing machine – an ideal computer with a potentially infinite memory tape – can simulate any other Turing machine, and therefore marks the outer limits of computation. The elaboration processes do not coincide between machines and humans, but on this view machines can simulate cognitive processes, achieving analogous results.
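To make the idea of a machine that simulates other machines more concrete, here is a small sketch of a Turing machine simulator in Python. Everything in it is an illustrative assumption rather than Turing's own notation: the rule-table format, the state names `right`, `carry` and `halt`, and the example machine `INCREMENT`, which adds one to a binary number. The point is only that the machine is passed in as data, so the same short procedure can run any rule table.

```python
from collections import defaultdict

BLANK = "_"

# Hypothetical example machine: add 1 to a binary number.
# (state, symbol read) -> (symbol to write, head movement, next state)
INCREMENT = {
    ("right", "0"): ("0", +1, "right"),    # scan to the right end of the number
    ("right", "1"): ("1", +1, "right"),
    ("right", BLANK): (BLANK, -1, "carry"),
    ("carry", "1"): ("0", -1, "carry"),    # 1 + 1 = 0, carry moves left
    ("carry", "0"): ("1", 0, "halt"),      # absorb the carry and stop
    ("carry", BLANK): ("1", 0, "halt"),    # number grew by one digit
}


def run_turing_machine(rules, tape, state="right", max_steps=10_000):
    """Run a rule table on a tape; return (final tape, steps executed)."""
    cells = defaultdict(lambda: BLANK, enumerate(tape))  # unbounded tape
    head = 0
    for steps in range(max_steps):
        if state == "halt":
            used = [i for i, symbol in cells.items() if symbol != BLANK]
            if not used:
                return "", steps
            return "".join(cells[i] for i in range(min(used), max(used) + 1)), steps
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += move
    raise RuntimeError("step budget exhausted before the machine halted")


print(run_turing_machine(INCREMENT, "1011"))  # ('1100', 8)
```

The `max_steps` budget is a practical safeguard in this sketch, not part of the theoretical model: an ideal Turing machine has no such limit.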

Within this theoretical framework, Space and Time emerge as measures of computational complexity: time as the number of instructions executed, space as the amount of memory used. These are the fundamental limits of computation: not all problems are solvable within reasonable time and space.
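As a rough illustration of these two measures, the sketch below computes the same Fibonacci number twice, counting function calls as a stand-in for time and stored entries as a stand-in for space. The function names and the choice of Fibonacci numbers are assumptions made for the example; the counts are proxies, not formal complexity measures.

```python
def fib_naive(n, counter):
    """Recompute every subproblem: many steps, no extra memory table."""
    counter["calls"] += 1
    if n < 2:
        return n
    return fib_naive(n - 1, counter) + fib_naive(n - 2, counter)


def fib_memo(n, counter, memo):
    """Store each subproblem once: far fewer steps, but memory grows with n."""
    counter["calls"] += 1
    if n < 2:
        return n
    if n not in memo:
        memo[n] = fib_memo(n - 1, counter, memo) + fib_memo(n - 2, counter, memo)
    return memo[n]


naive_counter = {"calls": 0}
memo_counter = {"calls": 0}
memo_table = {}

print(fib_naive(20, naive_counter), naive_counter["calls"])
print(fib_memo(20, memo_counter, memo_table), memo_counter["calls"], len(memo_table))
```

The second version trades memory for time: it answers with a few dozen calls instead of thousands, at the cost of keeping a table whose size grows with the input.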

From Abstraction to Materialisation

The Turing machine was a mathematical abstraction. In 1945, John von Neumann described the architecture that turned it into an electronic computer: under the stored-program concept, algorithmic instructions are held in memory as manipulable data. The algorithm became software.

In 1948, Claude Shannon founded information theory, demonstrating that any information can be encoded as binary digits, or bits (0 and 1). The bit became the elementary unit through which algorithms manipulate digitised reality.

From the 1950s to the 1980s, algorithms remained scientific and military tools: nuclear simulations, cryptography, ballistic calculations. With the personal computer in the 1980s–90s they entered homes but became opaque: graphical interfaces separated users from the underlying logic. Clicking an icon hides thousands of lines of code. The algorithm became invisible precisely as it became ubiquitous.

PageRank and the government of algorithms

In 1998, Google’s PageRank algorithm, which scores a page by the number and standing of the pages that link to it, transformed web search. It was no longer the user who decided which information was relevant: the algorithm classified, ranked, and rendered information visible or invisible. This gave rise to algorithmic governmentality – the power to organise access to knowledge through computational procedures that present themselves as neutral, objective, mathematically necessary.
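The ranking principle can be sketched in a few lines. What follows is a simplified version of the published PageRank idea, not Google's production system: the four-page link graph is invented, the handling of pages without outgoing links is one common convention among several, and the damping value of 0.85 is the commonly cited default.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Score pages by repeatedly passing each page's rank along its links."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page receives a small baseline share of rank.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            # Assumption: a page with no outgoing links spreads its rank evenly.
            targets = outgoing or pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Invented toy web: each key links to the pages in its list.
toy_web = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
scores = pagerank(toy_web)
for page in sorted(scores, key=scores.get, reverse=True):
    print(page, round(scores[page], 3))
```

Even in this toy graph, visibility is decided by design choices such as the damping value and the treatment of dead ends, which is the sense in which a ranking that looks mathematically necessary still embeds decisions.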

Recommender systems introduced a qualitative mutation: the algorithm no longer retrieves what the user is looking for, it predicts what the user will want. Behavioural profiling becomes the core business of digital capitalism.

Machine Learning: Opaque Simulation

From the 2010s onwards, machine learning marked a rupture. Classical algorithms are explicit instructions written by programmers. Machine-learning algorithms learn patterns from massive datasets without the programmer specifying the rules. Neural networks are “trained” on millions of examples until they develop predictive capabilities.
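The contrast between a written rule and a learned one can be shown with a deliberately tiny example. In this sketch the task (flagging messages by their number of exclamation marks), the data, and the function names are all invented for illustration; real machine-learning systems fit millions of parameters rather than a single threshold.

```python
def explicit_rule(exclamation_marks):
    """Classical approach: the programmer writes the rule by hand."""
    return exclamation_marks >= 4


def learn_threshold(examples):
    """Learning approach: pick the threshold that makes the fewest
    mistakes on labelled examples, without being told the rule."""
    candidates = sorted(value for value, _ in examples)

    def errors(threshold):
        return sum((value >= threshold) != label for value, label in examples)

    return min(candidates, key=errors)


# Invented training data: (exclamation marks, labelled as spam?)
training = [(0, False), (1, False), (2, False), (5, True), (7, True), (9, True)]
threshold = learn_threshold(training)
print(threshold)           # 5
print(8 >= threshold)      # True: a new message with 8 marks is flagged
```

The learned threshold depends entirely on the examples supplied: change the data and the rule changes with it, without anyone having rewritten the program.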

A facial-recognition algorithm contains millions of parameters (synaptic weights) progressively optimised during training. The result is a black box: a system that works, but whose internal reasoning no one – not even its creators – can fully trace.

This opacity is structural (irreducible complexity with billions of parameters) and strategic (trade secret). Paradoxically, we return to Hilbert’s problem inverted: we now have algorithms that solve complex tasks, but we cannot formally prove why they work nor guarantee that they will always work.

Four Functions of algorithms

Contemporary algorithms operate through:

- Classification: recognising patterns to categorise entities (faces, texts, behaviours). Content moderation, surveillance, credit scoring.

- Prediction: probabilistic estimation of future events based on statistical correlations. Predictive policing, advertising targeting, insurance priced on risk profiles.

- Optimisation: finding the configuration that maximises an objective function. Dynamic pricing, social-feed curation, just-in-time logistics.

- Generation: producing synthetic content (text, images, audio) that imitates learned patterns. Generative AI such as GPT, DALL-E, Midjourney.

In all these cases, the algorithm is not a neutral tool but a socio-technical actor that produces reality: it decides who sees what, who gets credit, who is stopped by the police, what price is offered to which customer.

New algorithms

Contemporary algorithms do not implement “pure rationality”: they implement situated rationality, embedded in specific economic and political goals. Facebook’s algorithm does not maximise user well-being but “time on platform”, because attention-time is the commodity Facebook sells. Amazon’s algorithm does not optimise customer satisfaction but profit, through personalised pricing and ranking.

The critical question becomes: which rationality is being mechanised – and in whose interest?

Bias and the Appearance of Objectivity

Algorithms present themselves as objective, mathematically necessary. But this supposed objectivity hides design choices and embedded values. Algorithmic bias is not a malfunction but a structural consequence: if a hiring algorithm discriminates against women, it is because it was trained on historical data that already reflect discrimination. The algorithm automates and amplifies prejudice while presenting it as a neutral technical decision.
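The mechanism can be reproduced in miniature. The sketch below uses invented, synthetic hiring records and a deliberately naive model that only memorises past hiring rates; the group labels, records, and function names are all hypothetical. It is meant to show the logic of the argument, not to describe any real system.

```python
from collections import defaultdict

# Synthetic, invented records: (group, qualified, hired).
# Qualified candidates from group B were mostly rejected in the past.
history = [
    ("A", True, True), ("A", True, True), ("A", True, True), ("A", False, False),
    ("B", True, False), ("B", True, False), ("B", True, True), ("B", False, False),
]


def train(records):
    """'Learn' the historical hiring rate for each (group, qualified) pair."""
    counts = defaultdict(lambda: [0, 0])  # key -> [hired, total]
    for group, qualified, hired in records:
        counts[(group, qualified)][0] += int(hired)
        counts[(group, qualified)][1] += 1
    return {key: hired / total for key, (hired, total) in counts.items()}


def recommend(model, group, qualified):
    """Recommend a candidate when similar people were usually hired before."""
    return model.get((group, qualified), 0.0) >= 0.5


model = train(history)
print(recommend(model, "A", True))  # True
print(recommend(model, "B", True))  # False
```

Two equally qualified candidates receive different recommendations, and nothing in the code mentions discrimination: the disparity comes entirely from the records the model was fitted to.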

As Cathy O’Neil points out, oppressive algorithms are opaque, operate at scale, and harm people without offering them any real possibility of appeal.

Concentration of Computational Power

Producing advanced algorithms requires enormous computing resources, massive datasets, and specialised expertise. This concentrates algorithmic power in a few corporations (Google, Meta, Amazon, Microsoft in the West; Alibaba, Tencent, Baidu in China), with three key effects:

- Strategic opacity: proprietary algorithms protected as trade secrets, removed from democratic accountability

- Data extractivism: mass surveillance as an operational precondition

- Network effects: those with more users have more data, therefore better algorithms, therefore attract more users – self-reinforcing monopolies

Algorithms and Governmentality

Drawing on Foucault, Antoinette Rouvroy describes algorithmic governmentality as a form of power that governs through prediction and pre-emption. No longer disciplining bodies after the fact, but anticipating future behaviours on the basis of statistical correlations and intervening before events occur.

Predictive policing flags “potential criminals” before any offence is committed. Credit-scoring systems deny loans before any default happens. Social feeds modulate what we see, shaping preferences and identities. This governmentality produces an algorithmic subjectivation: identities become demographic clusters, behavioural profiles, bundles of probabilities.

Social sorting – the automated categorisation of people into classes of risk, merit, value – becomes a mechanism of systemic discrimination that operates without explicit discriminatory intent. It is the automation of social stratification.

Political Economy of the Algorithm

Algorithms are the means of production of digital capitalism. Shoshana Zuboff speaks of surveillance capitalism: a model built on the extraction of behavioural surplus – data about actions, preferences, emotions – transformed into “prediction products” sold on behavioural futures markets. Algorithms are the machinery of this extraction.

Labour precarity is mediated by algorithms: Uber, Deliveroo, TaskRabbit and similar platforms use algorithms to assign tasks, rate performance, determine pay. The gig worker is subject to algorithmic management 24/7, with no room for negotiation. Digital Taylorism is more pervasive than its analogue predecessor.

Resisting Naturalisation

Algorithms are neither inevitable nor neutral. They are socio-technical artefacts that can be modified, contested, replaced. Algorithmic literacy requires:

- Understanding limits: there are dimensions of experience – contingency, freedom, unpredictability – that structurally exceed algorithmic prediction

- Recognising political choices: every algorithm embeds values and power relations. Ask: who designed it? With which data? Which objective does it optimise? Who benefits?

- Challenging opacity: the right to explanation, independent auditing, and regulation (such as the EU AI Act) are necessary but not sufficient tools

- Building alternatives: open-source projects, democratic algorithmic governance, and non-profit models show that alternatives to platform capitalism do exist

Perhaps the most radical form of resistance is the recovery of the remainder: everything that cannot be coded, quantified, predicted. Error, inefficiency, unpredictability. In a world governed by optimisation algorithms, reclaiming the right to imperfection is a political gesture.

Conclusion: Which Rationality?

If in 1928 Hilbert sought an algorithm to determine mathematical truth, today algorithms produce “truth regimes”: not truth as correspondence with reality, but truth as an effect of power-laden procedures. Google’s algorithm decides what is relevant, Facebook’s algorithm what goes viral, credit-scoring algorithms who is trustworthy. They do not describe reality: they perform it.

The history of the algorithm from Leibniz to the present is the history of how a mathematical abstraction has become an infrastructure of power. The question is no longer whether to use algorithms – they are now a pervasive infrastructure – but rather which rationality they implement, and in whose interest.

If it is true that machines can simulate human thought, it is equally true that humans can refuse to think like machines.

Decoding this rationality, challenging its claims to neutrality, resisting its naturalisation: this is the work to be done.

Further Reading

Box 1: The Halting Problem

Turing’s halting problem shows that there can be no general algorithm which, given any arbitrary program and input, determines whether that program will eventually halt or run forever. It is a fundamental theoretical limit of computation: there exist logically well-formed questions for which no algorithmic procedure of answer exists.
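The argument behind this result can be sketched in code. Suppose someone claims to have written a function that predicts whether any program halts. The hypothetical helper `make_contrarian` below builds a program that asks the predictor about itself and then does the opposite, so the predictor is wrong about at least that one program. The names are illustrative assumptions, and this is an informal sketch of the diagonal argument, not a formal proof.

```python
def make_contrarian(claims_to_halt):
    """Build a program that contradicts whatever the predictor says about it."""
    def contrarian():
        if claims_to_halt(contrarian):
            while True:      # predicted to halt, so run forever instead
                pass
        return "halted"      # predicted to run forever, so halt at once
    return contrarian


def says_never_halts(program):
    """A candidate predictor that answers 'runs forever' for every program."""
    return False


program = make_contrarian(says_never_halts)
print(says_never_halts(program))  # False: the predictor claims it never halts
print(program())                  # halted: the prediction was wrong
```

A predictor that answered "halts" instead would be refuted in the other direction, since the contrarian program would then loop forever; for that reason it should not actually be run. Whatever the candidate predictor answers, the construction produces a counterexample.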

Box 2: Recursive Functions

A recursive function solves a problem by reducing it to a simpler version of itself. Example: the factorial of n (n!) is defined as n × (n − 1)!, with 1! = 1 as the base case. The complex problem (computing 5!) is reduced to simpler ones (4!, then 3!, then 2!) down to the base case 1!. In the technical sense used in computability theory, the recursive functions are exactly those that an algorithm can compute; whether they can be computed efficiently is a separate question.
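The factorial example translates almost word for word into code. This is a minimal Python sketch; the function name and the error handling for negative inputs are additions made for the example.

```python
def factorial(n: int) -> int:
    """Compute n! by reducing it to the simpler problem (n - 1)!."""
    if n < 0:
        raise ValueError("factorial is not defined for negative integers")
    if n <= 1:
        return 1                     # base case: 0! = 1! = 1
    return n * factorial(n - 1)      # recursive step: n! = n × (n − 1)!


print(factorial(5))  # 120
```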

Box 3: Black Box and Explainability

A deep-learning model with 175 billion parameters (such as GPT-3) is literally incomprehensible: no human can fully trace why it generates a specific output given a specific input. The field of explainable AI tries to develop techniques to make these systems interpretable, but the trade-off between performance and interpretability remains unresolved.

Related Entries

- Artificial Intelligence: computational systems that simulate cognitive capacities

- Machine Learning: algorithms that learn patterns from data

- Platform: digital infrastructures that mediate social and economic interactions

- Surveillance: systematic collection and analysis of behavioural data

- Big Data: massive datasets analysed algorithmically to extract patterns

- Governmentality: forms of power that govern through the conduct of conduct

Essential Bibliography

- Turing, Alan (1936). “On Computable Numbers, with an Application to the Entscheidungsproblem”.

- Gillespie, Tarleton (2014). “The Relevance of Algorithms”.

- Pasquale, Frank (2015). The Black Box Society.

- O’Neil, Cathy (2016). Weapons of Math Destruction.

- Rouvroy, Antoinette (2013). “The End(s) of Critique: Data-behaviourism vs. Due-process”.

- Zuboff, Shoshana (2019). The Age of Surveillance Capitalism.